Sample size, number of categories and sampling assumptions: Exploring some differences between categorization and generalization

Abstract

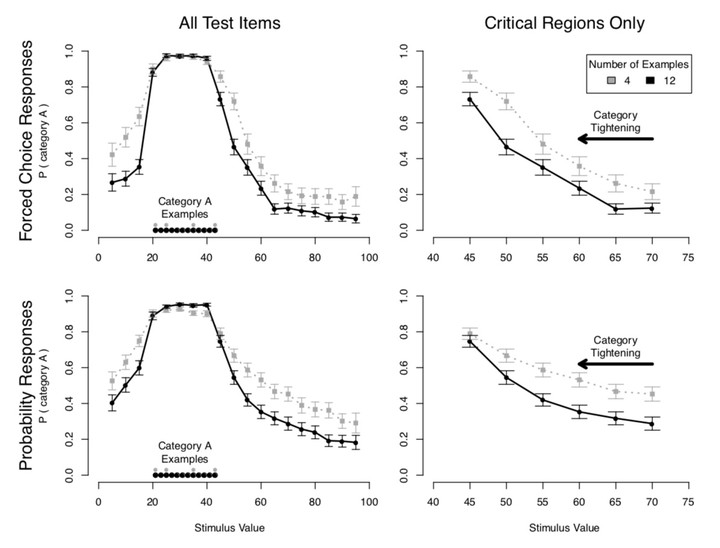

Categorization and generalization are fundamentally related inference problems. Yet leading computational models of categorization (e.g., Nosofsky, 1986) and generalization (e.g., Tenenbaum & Griffiths, 2001) make qualitatively different predictions about how inference should change as a function of the number of items. All else being equal, categorization models predict that adding items to a category increases the probability of assigning a new item to that category, whereas generalization models predict a decrease, that is, a tightening of the category as exemplars accumulate. This paper investigates this discrepancy, showing that people do indeed behave qualitatively differently in categorization and generalization tasks even when all superficial elements of the task are held constant. Furthermore, the effect of category frequency on generalization is moderated by assumptions about how the items are sampled. We show that neither model naturally accounts for the pattern of behavior across both categorization and generalization tasks, and we discuss theoretical extensions of these frameworks to account for the importance of category frequency and sampling assumptions.
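The opposing predictions can be illustrated with a minimal sketch, assuming simplified versions of each framework rather than the models as fit in this paper. The categorization side uses a summed-similarity choice rule in the spirit of Nosofsky's (1986) GCM (exponential similarity, no attention weights or response bias). The generalization side uses Bayesian generalization over a hypothetical hypothesis space of integer intervals on [0, 20] with a uniform prior, where strong sampling applies the size principle and weak sampling gives every consistent hypothesis equal likelihood. The function names, stimulus values, and the parameter `c` are illustrative assumptions. "More items" is modeled by replicating the same exemplar locations, so that all else stays equal.

```python
import math


def gcm_prob_a(x, exemplars_a, exemplars_b, c=1.0):
    """Summed-similarity choice probability for category A (GCM-style sketch).

    Similarity is exp(-c * |x - y|); attention weights and bias are omitted.
    """
    s_a = sum(math.exp(-c * abs(x - y)) for y in exemplars_a)
    s_b = sum(math.exp(-c * abs(x - y)) for y in exemplars_b)
    return s_a / (s_a + s_b)


def generalization_prob(y, examples, lo=0, hi=20, strong=True):
    """P(y belongs to the concept | examples) under Bayesian generalization.

    Hypotheses are integer intervals [a, b] within [lo, hi] with a uniform prior.
    Strong sampling: likelihood (1 / |h|) ** n (the size principle).
    Weak sampling: likelihood 1 for every hypothesis consistent with the data.
    """
    lo_ex, hi_ex = min(examples), max(examples)
    num = den = 0.0
    for a in range(lo, hi + 1):
        for b in range(a, hi + 1):
            if a > lo_ex or b < hi_ex:
                continue  # interval fails to contain every example
            size = b - a + 1
            like = (1.0 / size) ** len(examples) if strong else 1.0
            den += like
            if a <= y <= b:
                num += like
    return num / den


if __name__ == "__main__":
    # Replicate the same two exemplar locations k times ("more items, all else equal").
    for k in (1, 2, 4, 8):
        items = [9, 11] * k
        print(
            k,
            round(gcm_prob_a(14, items, [17]), 3),             # rises with k
            round(generalization_prob(14, items), 3),           # falls with k (strong)
            round(generalization_prob(14, items, strong=False), 3),  # flat (weak)
        )
```

In this toy setup the summed-similarity probability of assigning the probe (14) to category A grows with k, while the strong-sampling generalization probability shrinks as the smallest consistent interval [9, 11] comes to dominate the posterior. Under weak sampling the replicated examples leave the set of consistent hypotheses unchanged, so generalization stays flat, which is one way sampling assumptions can moderate the effect of frequency.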